Copyright Red Hat 1998 - 2015

This document is licensed under the "Creative Commons Attribution-ShareAlike (CC-BY-SA) 3.0" license.

This is the JGroups manual. It provides information about:

-

Installation and configuration

-

Using JGroups (the API)

-

Configuration of the JGroups protocols

The focus is on how to use JGroups, not on how JGroups is implemented.

Here are a couple of points I want to abide by throughout this book:

-

I like brevity. I will strive to describe concepts as clearly as possible (for a non-native English speaker) and will refrain from saying more than I have to to make a point.

-

I like simplicity. Keep It Simple and Stupid. This is one of the biggest goals I have both in writing this manual and in writing JGroups. It is easy to explain simple concepts in complex terms, but it is hard to explain a complex system in simple terms. I’ll try to do the latter.

So, how did it all start?

I spent 1998-1999 at the Computer Science Department at Cornell University as a post-doc, in Ken Birman’s group. Ken is credited with inventing the group communication paradigm, especially the Virtual Synchrony model. At the time they were working on their third generation group communication prototype, called Ensemble. Ensemble followed Horus (written in C by Robbert VanRenesse), which followed ISIS (written by Ken Birman, also in C). Ensemble was written in OCaml, developed at INRIA, which is a functional language and related to ML. I never liked the OCaml language, which in my opinion has a hideous syntax. Therefore I never got warm with Ensemble either.

However, Ensemble had a Java interface (implemented by a student in a semester project) which allowed me to program in Java and use Ensemble underneath. The Java part would require that an Ensemble process was running somewhere on the same machine, and would connect to it via a bidirectional pipe. The student had developed a simple protocol for talking to the Ensemble engine, and extended the engine as well to talk back to Java.

However, I still needed to compile and install the Ensemble runtime for each different platform, which is exactly why Java was developed in the first place: portability.

Therefore I started writing a simple framework (now JChannel), which would allow me to treat Ensemble as just another group communication transport, which could be replaced at any time by a pure Java solution. And soon I found myself working on a pure Java implementation of the group communication transport (now: ProtocolStack). I figured that a pure Java implementation would have a much bigger impact than something written in Ensemble. In the end I didn’t spend much time writing scientific papers that nobody would read anyway (I guess I’m not a good scientist, at least not a theoretical one), but rather code for JGroups, which could have a much bigger impact. For me, knowing that real-life projects/products are using JGroups is much more satisfactory than having a paper accepted at a conference/journal.

That’s why, after my time was up, I left Cornell and academia altogether, and started a job in the telecom industry in Silicon Valley.

At around that time (May 2000), SourceForge had just opened its site, and I decided to use it for hosting JGroups. This was a major boost for JGroups because now other developers could work on the code. From then on, the page hit and download numbers for JGroups have steadily risen.

In the fall of 2002, Sacha Labourey contacted me, letting me know that JGroups was being used by JBoss for their clustering implementation. I joined JBoss in 2003 and have been working on JGroups and JBossCache. My goal is to make JGroups the most widely used clustering software in Java …

I want to thank all contributors to JGroups, present and past, for their work. Without you, this project would never have taken off the ground.

I also want to thank Ken Birman and Robbert VanRenesse for many fruitful discussions of all aspects of group communication in particular and distributed systems in general.

I want to dedicate this manual to Jeannette, Michelle and Nicole.

Bela Ban, San Jose, Aug 2002, Kreuzlingen Switzerland 2014

1. Overview

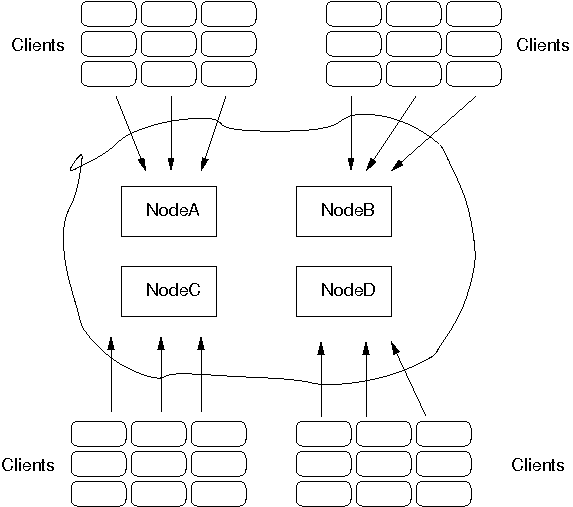

Group communication uses the terms group and member. Members are part of a group. In the more common terminology, a member is a node and a group is a cluster. We use these terms interchangeably.

A node is a process, residing on some host. A cluster can have one or more nodes belonging to it. There can be multiple nodes on the same host, and all may or may not be part of the same cluster. Nodes can of course also run on different hosts.

JGroups is toolkit for reliable group communication. Processes can join a group, send messages to all members or single members and receive messages from members in the group. The system keeps track of the members in every group, and notifies group members when a new member joins, or an existing member leaves or crashes. A group is identified by its name. Groups do not have to be created explicitly; when a process joins a non-existing group, that group will be created automatically. Processes of a group can be located on the same host, within the same LAN, or across a WAN. A member can be part of multiple groups.

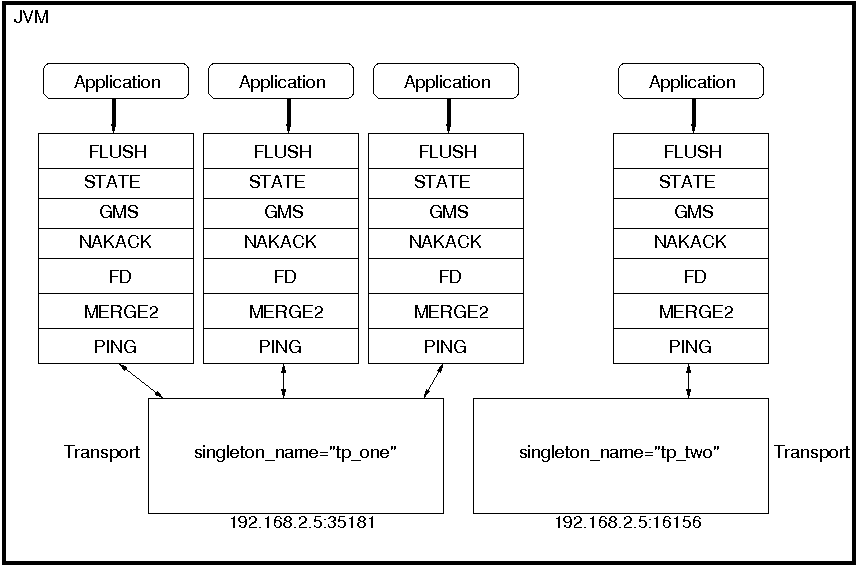

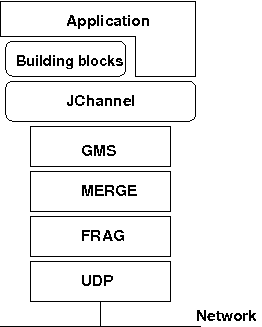

The architecture of JGroups is shown in The architecture of JGroups.

It consists of 3 parts: (1) the Channel used by application programmers to build reliable group communication applications, (2) the building blocks, which are layered on top of the channel and provide a higher abstraction level and (3) the protocol stack, which implements the properties specified for a given channel.

This document describes how to install and use JGroups, ie. the Channel API and the building blocks. The targeted audience is application programmers who want to use JGroups to build reliable distributed programs that need group communication.

A channel is connected to a protocol stack. Whenever the application sends a message, the channel passes it on to the protocol stack, which passes it to the topmost protocol. The protocol processes the message and the passes it down to the protocol below it. Thus the message is handed from protocol to protocol until the bottom (transport) protocol puts it on the network. The same happens in the reverse direction: the transport protocol listens for messages on the network. When a message is received it will be handed up the protocol stack until it reaches the channel. The channel then invokes the receive() callback in the application to deliver the message.

When an application connects to the channel, the protocol stack will be started, and when it disconnects the stack will be stopped. When the channel is closed, the stack will be destroyed, releasing its resources.

The following three sections give an overview of channels, building blocks and the protocol stack.

1.1. Channel

To join a group and send messages, a process has to create a channel and connect to it using the group name (all channels with the same name form a group). The channel is the handle to the group. While connected, a member may send and receive messages to/from all other group members. The client leaves a group by disconnecting from the channel. A channel can be reused: clients can connect to it again after having disconnected. However, a channel allows only 1 client to be connected at a time. If multiple groups are to be joined, multiple channels can be created and connected to. A client signals that it no longer wants to use a channel by closing it. After this operation, the channel cannot be used any longer.

Each channel has a unique address. Channels always know who the other members are in the same group: a list of member addresses can be retrieved from any channel. This list is called a view. A process can select an address from this list and send a unicast message to it (also to itself), or it may send a multicast message to all members of the current view (also including itself). Whenever a process joins or leaves a group, or when a crashed process has been detected, a new view is sent to all remaining group members. When a member process is suspected of having crashed, a suspicion message is received by all non-faulty members. Thus, channels receive regular messages, and view and suspicion notifications.

The properties of a channel are typically defined in an XML file, but JGroups also allows for configuration through simple strings, URIs, DOM trees or even programmatically.

The Channel API and its related classes is described in the API section.

1.2. Building Blocks

Channels are simple and primitive. They offer the bare functionality of group communication, and have been designed after the simple model of sockets, which are widely used and well understood. The reason is that an application can make use of just this small subset of JGroups, without having to include a whole set of sophisticated classes, that it may not even need. Also, a somewhat minimalistic interface is simple to understand: a client needs to know about 5 methods to be able to create and use a channel.

Channels provide asynchronous message sending/reception, somewhat similar to UDP. A message sent is essentially put on the network and the send() method will return immediately. Conceptual requests, or responses to previous requests, are received in undefined order, and the application has to take care of matching responses with requests.

JGroups offers building blocks that provide more sophisticated APIs on top of a Channel. Building blocks either create and use channels internally, or require an existing channel to be specified when creating a building block. Applications communicate directly with the building block, rather than the channel. Building blocks are intended to save the application programmer from having to write tedious and recurring code, e.g. request-response correlation, and thus offer a higher level of abstraction to group communication.

Building blocks are described in Building Blocks.

1.3. The Protocol Stack

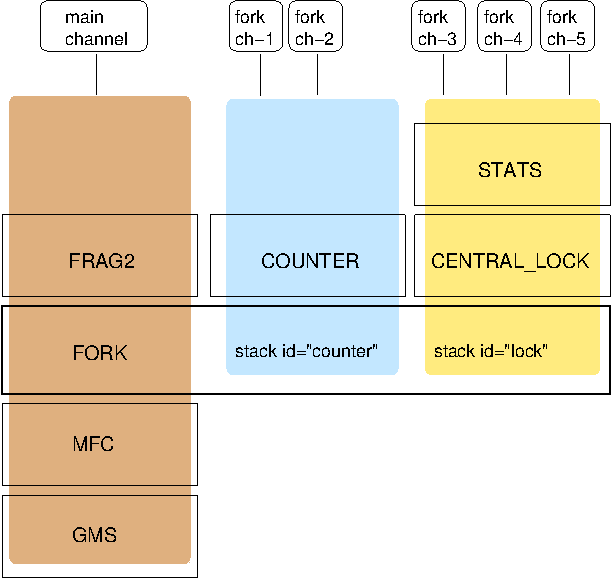

The protocol stack containins a number of protocol layers in a bidirectional list. All messages sent and received over the channel have to pass through all protocols. Every layer may modify, reorder, pass or drop a message, or add a header to a message. A fragmentation layer might break up a message into several smaller messages, adding a header with an id to each fragment, and re-assemble the fragments on the receiver’s side.

The composition of the protocol stack, i.e. its protocols, is determined by the creator of the channel: an XML file defines the protocols to be used (and the parameters for each protocol). The configuration is then used to create the stack.

Knowledge about the protocol stack is not necessary when only using channels in an application. However, when an application wishes to ignore the default properties for a protocol stack, and configure their own stack, then knowledge about what the individual layers are supposed to do is needed.

2. Installation and configuration

The installation refers to version 3.x of JGroups. Refer to the installation instructions (INSTALL.html) that are shipped with the JGroups version you downloaded for details.

The JGroups JAR can be downloaded from SourceForge.

It is named jgroups-x.y.z, where x=major, y=minor and z=patch version, for example jgroups-3.0.0.Final.jar.

The JAR is all that’s needed to get started using JGroups; it contains all core, demo and (selected) test

classes, the sample XML configuration files and the schema.

The source code is hosted on GitHub. To build JGroups,

ANT is currently used. In Building JGroups from source we’ll show how to build JGroups from source.

2.1. Requirements

-

JGroups up to (and including) 3.5.0.Final requires JDK 6.

-

JGroups 3.6.x to (excluding) 4.0 requires JDK 7.

-

JGroups 4.0 will require JDK 8.

-

There is no JNI code present so JGroups should run on all platforms.

-

Logging: by default, JGroups tries to use log4j2. If the classes are not found on the classpath, it resorts to log4j, and if still not found, it falls back to java.util.logging logger. See Logging for details on log configuration.

2.2. Structure of the source version

The source version consists of the following directories and files:

- src

-

the sources

- tests

-

unit and stress tests

- lib

-

JARs needed to either run the unit tests, or build the manual etc. No JARs from here are required at runtime! Note that these JARs are downloaded automatically via ivy.

- conf

-

configuration files needed by JGroups, plus default protocol stack definitions

- doc

-

documentation

2.3. Building JGroups from source

-

Download the sources from GitHub, either via git clone, or the download link into a directory

JGroups, e.g./home/bela/JGroups. -

Download ant (preferably 1.8.x or higher)

-

Change to the

JGroupsdirectory -

Run

ant -

This will compile all Java files (into the

classesdirectory). Note that if thelibdirectory doesn’t exist, ant will download ivy intoliband then use ivy to download the dependent libraries defined inivy.xml. -

To generate the JGroups JAR:

ant jar -

This will generate the following JAR files in the

distdirectory:-

jgroups-x.y.z.jar: the JGroups JAR -

jgroups-sources.jar: the source code for the core classes and demos

-

-

Now add the following directories to the classpath:

-

JGroups/classes -

JGroups/conf -

All needed JAR files in

JGroups/lib. Note that most JARs inlibare only required for running unit tests and generating test reports

-

-

To generate JavaDocs simple run:

ant javadocand the Javadoc documentation will be generated indist/javadoc

2.4. Logging

JGroups has no runtime dependencies; all that’s needed to use it is to have jgroups.jar on the classpath. For logging, this means the JVM’s logging (java.util.logging) is used.

However, JGroups can use any other logging framework. By default, log4j and log4j2 are supported if the corresponding JARs are found on the classpath.

2.4.1. log4j2

To use log4j2, the API and CORE JARs have to be found on the

classpath. There’s an XML configuration for log4j2 in the conf dir, which can be used e.g. via

-Dlog4j.configurationFile=$JGROUPS/conf/log4j2.xml.

log4j2 is currently the preferred logging library used by JGroups, and will be used even if the log4j JAR is also present on the classpath.

2.4.2. log4j

To use log4j, the log4j JAR has to be found on the classpath. Note though that

if the log4j2 API and CORE JARs are found, then log4j2 will be used, so those JARs will have to be removed if log4j

is to be used. There’s an XML configuration for log4j in the conf dir, which can be used e.g. via

-Dlog4j.configuration=file:$JGROUPS/conf/log4j.properties.

2.4.3. JDK logging (JUL)

To force use of JDK logging, even if the log4j(2) JARs are present, -Djgroups.use.jdk_logger=true can be used.

2.4.4. Support for custom logging frameworks

JGroups allows custom loggers to be used instead of the ones supported by default. To do this, interface

CustomLogFactory has to be implemented:

public interface CustomLogFactory {

Log getLog(Class clazz);

Log getLog(String category);

}The implementation needs to return an implementation of org.jgroups.logging.Log.

To use the custom log, LogFactory.setCustomLogFactory(CustomLogFactory f) needs to be called.

2.5. Testing your setup

To see whether your system can find the JGroups classes, execute the following command:

java org.jgroups.Version

or

java -jar jgroups-x.y.z.jar

You should see the following output (more or less) if the class is found:

$ java org.jgroups.Version Version: 3.5.0.Final

2.6. Running a demo program

To test whether JGroups works okay on your machine, run the following command twice:

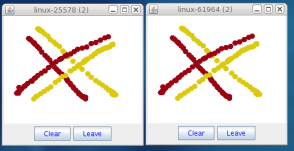

java -Djava.net.preferIPv4Stack=true org.jgroups.demos.Draw

2 whiteboard windows should appear as shown in Screenshot of 2 Draw instances.

If you started them simultaneously, they could initially show a membership of 1 in their title bars. After some time, both windows should show 2. This means that the two instances found each other and formed a cluster.

When drawing in one window, the second instance should also be updated. As the default group transport uses IP multicast, make sure that - if you want start the 2 instances in different subnets - IP multicast is enabled. If this is not the case, the 2 instances won’t find each other and the example won’t work.

You can change the properties of the demo to for example use

a different transport if multicast doesn’t work (it should always

work on the same machine). Please consult the documentation to see how to do this.

State transfer (see the section in the API later) can also be tested by passing the -state flag to Draw.

2.7. Using IP Multicasting without a network connection

Sometimes there isn’t a network connection (e.g. DSL modem is down), or we want to multicast only on the local machine. For this the loopback interface (typically lo) can be configured, e.g.

route add -net 224.0.0.0 netmask 240.0.0.0 dev lo

This means that all traffic directed to the 224.0.0.0 network will be sent to the loopback interface, which means it

doesn’t need any network to be running. Note that the 224.0.0.0 network is a placeholder for all multicast addresses

in most UNIX implementations: it will catch all multicast traffic.

The above instructions may also work for Windows systems, but this hasn’t been tested. Note that not all operating systems allow multicast traffic to use the loopback interface.

Typical home networks have a gateway/firewall with 2 NICs:

the first (e.g. eth0) is connected to the outside world (Internet

Service Provider), the second (eth1) to the internal network, with

the gateway firewalling/masquerading traffic between the internal

and external networks. If no route for multicast traffic is added,

the default will be to use the fdefault gateway, which will

typically direct the multicast traffic towards the ISP. To prevent

this (e.g. ISP drops multicast traffic, or latency is too high),

we recommend to add a route for multicast traffic which goes to

the internal network (e.g. eth1).

2.8. It doesn’t work!

|

|

The section below refers to JGroups versions prior to 3.5. In 3.5 and later versions, mcast (see below) should be used.

|

Make sure your machine is set up correctly for IP multicasting. There are 2 test programs that can be used to detect

this: McastReceiverTest and McastSenderTest. Start McastReceiverTest, e.g.

java org.jgroups.tests.McastReceiverTest

Then start McastSenderTest:

java org.jgroups.tests.McastSenderTest

If you want to bind to a specific network interface card (NIC), use -bind_addr 192.168.0.2, where 192.168.0.2

is the IP address of the NIC to which you want to bind. Use this parameter in both sender and receiver.

You should be able to type in the McastSenderTest window and

see the output in the McastReceiverTest. If not, try to use -ttl 32 in the sender. If this still fails,

consult a system administrator to help you setup IP multicast correctly. If you are

the system administrator, look for another job :-)

Other means of getting help: there is a public forum on JIRA for questions. Also consider subscribing to the javagroups-users mailing list to discuss such and other problems.

2.8.1. mcast

Instead of McastSender and McastReceiver, a single program mcast can be used. Start multiple instances of it.

The options are:

-bind_addr-

the network interface to bind to for the receiver. If null,

mcastwill join all available interfaces -port-

the local port to use. If 0, an ephemeral port will be picked

-mcast_addr-

the multicast address to join

-mcast_port-

the port to listen on for multicasts

-ttl-

The TTL (for sending of packets)

2.9. Problems with IPv6

Another source of problems might be the use of IPv6, and/or misconfiguration of /etc/hosts. If you communicate between

an IPv4 and an IPv6 host, and they are not able to find each other, try the -Djava.net.preferIP4Stack=true

property, e.g.

java -Djava.net.preferIPv4Stack=true org.jgroups.demos.Draw -props /home/bela/udp.xml

The JDK uses IPv6 by default, although is has a dual stack, that is, it also supports IPv4. Here’s more details on the subject.

2.10. Wiki

There is a wiki which lists FAQs and their solutions at http://www.jboss.org/wiki/Wiki.jsp?page=JGroups. It is frequently updated and a useful companion to this manual.

2.11. I have discovered a bug!

If you think that you discovered a bug, submit a bug report on JIRA or send email to the jgroups-users mailing list if you’re unsure about it. Please include the following information: - [x] Version of JGroups (java org.jgroups.Version) - [x] Platform (e.g. Solaris 8) - [ ] Version of JDK (e.g. JDK 1.6.20_52) - [ ] Stack trace in case of a hang. Use kill -3 PID on UNIX systems or CTRL-BREAK on windows machines - [x] Small program that reproduces the bug (if it can be reproduced)

2.12. Supported classes

JGroups project has been around since 1998. Over this time, some of the JGroups classes have been used in experimental phases and have never been matured enough to be used in today’s production releases. However, they were not removed since some people used them in their products.

The following tables list unsupported and experimental classes. These classes are not actively maintained, and we will not work to resolve potential issues you might find. Their final faith is not yet determined; they might even be removed altogether in the next major release. Weight your risks if you decide to use them anyway.

2.12.1. Experimental classes

| Package | Class |

|---|---|

org.jgroups.blocks |

GridOutputStream |

org.jgroups.blocks |

GridInputStream |

org.jgroups.blocks |

GridFile |

org.jgroups.blocks |

PartitionedHashMap |

org.jgroups.blocks |

Cache |

org.jgroups.blocks |

GridFilesystem |

org.jgroups.util |

HashedTimingWheel |

org.jgroups.auth |

Krb5Token |

org.jgroups.client |

StompConnection |

org.jgroups.protocols |

DAISYCHAIN |

org.jgroups.protocols |

FD_ALL2 |

org.jgroups.protocols |

TCP_NIO |

org.jgroups.protocols |

SEQUENCER2 |

org.jgroups.protocols |

ABP |

org.jgroups.protocols |

SWIFT_PING |

org.jgroups.protocols |

SHUFFLE |

org.jgroups.protocols |

PRIO |

org.jgroups.protocols |

RATE_LIMITER |

org.jgroups.protocols |

TUNNEL |

2.12.2. Unsupported classes

| Package | Class |

|---|---|

org.jgroups.blocks |

ReplicatedTree |

org.jgroups.blocks |

PartitionedHashMap |

org.jgroups.blocks |

Cache |

org.jgroups.util |

HashedTimingWheel |

org.jgroups.protocols |

EXAMPLE |

org.jgroups.protocols |

TCP_NIO |

org.jgroups.protocols |

DISCARD_PAYLOAD |

org.jgroups.protocols |

ABP |

org.jgroups.protocols |

FD_PING |

org.jgroups.protocols |

DISCARD |

3. API

This chapter explains the classes available in JGroups that will be used by applications to build reliable group communication applications. The focus is on creating and using channels.

Information in this document may not be up-to-date, but the nature of the classes in JGroups

described here is the same. For the most up-to-date information refer to the Javadoc-generated documentation in

the doc/javadoc directory.

All of the classes discussed here are in the org.jgroups package unless otherwise mentioned.

3.1. Utility classes

The org.jgroups.util.Util class contains useful common functionality which cannot be assigned to any other package.

3.1.1. objectToByteBuffer(), objectFromByteBuffer()

The first method takes an object as argument and serializes it into a byte buffer (the object has to be serializable or externalizable). The byte array is then returned. This method is often used to serialize objects into the byte buffer of a message. The second method returns a reconstructed object from a buffer. Both methods throw an exception if the object cannot be serialized or unserialized.

3.1.2. objectToStream(), objectFromStream()

The first method takes an object and writes it to an output stream. The second method takes an input stream and reads an object from it. Both methods throw an exception if the object cannot be serialized or unserialized.

3.2. Interfaces

These interfaces are used with some of the APIs presented below, therefore they are listed first.

3.2.1. MessageListener

The MessageListener interface below provides callbacks for message reception and for providing and setting the state:

public interface MessageListener {

void receive(Message msg);

void getState(OutputStream output) throws Exception;

void setState(InputStream input) throws Exception;

}Method receive() is called whenever a message is received. The getState() and setState() methods are used to

fetch and set the group state (e.g. when joining). Refer to State transfer for a discussion of

state transfer.

3.2.2. MembershipListener

The MembershipListener interface is similar to the MessageListener interface above: every time a new view, a suspicion message,

or a block event is received, the corresponding method of the class implementing MembershipListener will be called.

public interface MembershipListener {

void viewAccepted(View new_view);

void suspect(Object suspected_mbr);

void block();

void unblock();

}Oftentimes the only callback that needs to be implemented will be

viewAccepted() which notifies the receiver that a new member has joined the

group or that an existing member has left or crashed. The suspect()

callback is invoked by JGroups whenever a member if suspected of having crashed, but not yet excluded

[1].

The block() method is called to notify the member that it will soon be blocked

sending messages. This is done by the FLUSH protocol, for example to ensure that nobody is sending

messages while a state transfer or view installation is in progress. When block() returns, any thread

sending messages will be blocked, until FLUSH unblocks the thread again, e.g. after the state has been

transferred successfully.

Therefore, block() can be used to send pending messages or complete some other work.

Note that block() should be brief, or else the entire FLUSH protocol is blocked.

The unblock() method is called to notify the member that the FLUSH protocol has completed and the member can resume

sending messages. If the member did not stop sending messages on block(), FLUSH simply blocked them and

will resume, so no action is required from a member. Implementation of the unblock() callback is optional.

|

|

Note that it is oftentimes simpler to extend ReceiverAdapter (see below) and implement the needed

callbacks than to implement all methods of both of these interfaces, as most callbacks are not needed.

|

3.2.3. Receiver

public interface Receiver extends MessageListener, MembershipListener;A Receiver can be used to receive messages and view changes; receive() will be invoked as soon as a

message has been received, and viewAccepted() will be called whenever a new view is installed.

3.2.4. ReceiverAdapter

This class implements Receiver with no-op implementations. When implementing a callback, we can simply extend ReceiverAdapter and overwrite receive() in order to not having to implement all callbacks of the interface.

ReceiverAdapter looks as follows:

public class ReceiverAdapter implements Receiver {

void receive(Message msg) {}

void getState(OutputStream output) throws Exception {}

void setState(InputStream input) throws Exception {}

void viewAccepted(View view) {}

void suspect(Address mbr) {}

void block() {}

void unblock() {}

}A ReceiverAdapter is the recommended way to implement callbacks.

|

|

Note that anything that could block should not be done in a callback. This includes sending of messages;

if we have FLUSH on the stack, and send a message in a viewAccepted() callback, then the following happens:

the FLUSH protocol blocks all

(multicast) messages before installing a view, then installs the view, then unblocks. However,

because installation of the view triggers the viewAccepted() callback, sending of messages inside of

viewAccepted() will block. This in turn blocks the viewAccepted() thread, so the flush will never return! If we need to send a message in a callback, the sending should be done on a separate thread, or a timer task should be submitted to the timer. |

3.2.5. ChannelListener

public interface ChannelListener {

void channelConnected(Channel channel);

void channelDisconnected(Channel channel);

void channelClosed(Channel channel);

}A class implementing ChannelListener can use the Channel.addChannelListener() method to register with a channel to obtain information about state changes in a channel. Whenever a channel is closed, disconnected or opened, the corresponding callback will be invoked.

3.3. Address

Each member of a group has an address, which uniquely identifies the member. The interface for such an

address is Address, which requires concrete implementations to provide methods such as comparison and

sorting of addresses. JGroups addresses have to implement the following interface:

public interface Address extends Externalizable, Comparable, Cloneable {

int size();

}For marshalling purposes, size() needs to return the number of bytes an instance of an address implementation

takes up in serialized form.

|

|

Please never use implementations of Address directly; Address should always be used as an opaque identifier of a cluster node! |

Actual implementations of addresses are often generated by the bottommost protocol layer (e.g. UDP or

TCP). This allows for all possible sorts of addresses to be used with JGroups.

Since an address uniquely identifies a channel, and therefore a group member, it can be used to send messages to that group member, e.g. in Messages (see next section).

The default implementation of Address is org.jgroups.util.UUID. It uniquely identifies

a node, and when disconnecting and reconnecting to a cluster, a node is given a new UUID on reconnection.

UUIDs are never shown directly, but are usually shown as a logical name (see Logical names). This is a name given to a node either via the user or via JGroups, and its sole purpose is to make logging output a bit more readable.

UUIDs maps to IpAddresses, which are IP addresses and ports. These are eventually used by the transport protocol to send a message.

3.4. Message

Data is sent between members in the form of messages (org.jgroups.Message).

A message can be sent by a member to a single member, or to

all members of the group of which the channel is an endpoint.

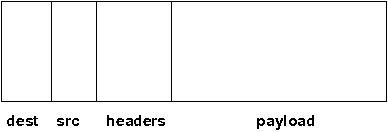

The structure of a message is shown in Structure of a message.

A message has 5 fields:

- Destination address

-

The address of the receiver. If

null, the message will be sent to all current group members.Message.getDest()returns the destination address of a message. - Source address

-

The address of the sender. Can be

null, and will be filled in by the transport protocol (e.g. UDP) before the message is put on the network.Message.getSrc()returns the source address, ie. the address of the sender of a message. - Flags

-

This is one byte used for flags. The currently recognized flags are

OOB,DONT_BUNDLE,NO_FC,NO_RELIABILITY,NO_TOTAL_ORDER,NO_RELAYandRSVP. ForOOB, see the discussion on the concurrent stack. For the use of flags see the message flags. - Payload

-

The actual data (as a byte buffer). The

Messageclass contains convenience methods to set a serializable object and to retrieve it again, using serialization to convert the object to/from a byte buffer. A message also has an offset and a length, if the buffer is only a subrange of a larger buffer. - Headers

-

A list of headers that can be attached to a message. Anything that should not be in the payload can be attached to a message as a header. Methods

putHeader(),getHeader()andremoveHeader()of Message can be used to manipulate headers.

Note that headers are only used by protocol implementers; headers should not be added or removed by application code!

A message is similar to an IP packet and consists of the payload (a byte buffer) and the addresses of the sender and receiver (as Addresses). Any message put on the network can be routed to its destination (receiver address), and replies can be returned to the sender’s address.

A message usually does not need to fill in the sender’s address when sending a message; this is done automatically by the protocol stack before a message is put on the network. However, there may be cases, when the sender of a message wants to give an address different from its own, so that for example, a response should be returned to some other member.

The destination address (receiver) can be an Address, denoting the address of a member, determined e.g.

from a message received previously, or it can be null, which means that the message

will be sent to all members of the group. A typical multicast message, sending string

"Hello" to all members would look like this:

Message msg=new Message(null, "Hello");

channel.send(msg);3.5. Header

A header is a custom bit of information that can be added to each message. JGroups uses headers extensively, for example to add sequence numbers to each message (NAKACK and UNICAST), so that those messages can be delivered in the order in which they were sent.

3.6. Event

Events are means by which JGroups protcols can talk to each other. Contrary to Messages, which travel over the network between group members, events only travel up and down the stack.

|

|

Headers and events are only used by protocol implementers; they are not needed by application code! |

3.7. View

A view (org.jgroups.View) is a list of the current members of a group. It consists

of a ViewId, which uniquely identifies the view (see below), and a list of members.

Views are installed in a channel automatically by the underlying protocol stack whenever a new member joins

or an existing one leaves (or crashes). All members of a group see the same sequence of views.

Note that the first member of a view is the coordinator (the one who emits new views). Thus, whenever the membership changes, every member can determine the coordinator easily and without having to contact other members, by picking the first member of a view.

The code below shows how to send a (unicast) message to the first member of a view (error checking code omitted):

View view=channel.getView();

Address first=view.getMembers().get(0);

Message msg=new Message(first, "Hello world");

channel.send(msg);Whenever an application is notified that a new view has been installed (e.g. by

Receiver.viewAccepted(), the view is already set in the channel. For example,

calling Channel.getView() in a viewAccepted()

callback would return the same view (or possibly the next one in case there has already been a new view!).

3.7.1. ViewId

The ViewId is used to uniquely number views. It consists of the address of the view creator and a

sequence number. ViewIds can be compared for equality and put in a hashmaps as they implement equals()

and hashCode().

|

|

Note that the latter 2 methods only take the ID into account. |

3.7.2. MergeView

Whenever a group splits into subgroups, e.g. due to a network partition, and later the subgroups merge

back together, a MergeView instead of a View will be received by the application. The MergeView is

a subclass of View and contains as additional instance variable the list of views that were merged. As

an example if the group denoted by view V1:(p,q,r,s,t) split into subgroups

V2:(p,q,r) and V2:(s,t), the merged view might be

V3:(p,q,r,s,t). In this case the MergeView would contains a list of 2 views:

V2:(p,q,r) and V2:(s,t).

3.8. JChannel

In order to join a group and send messages, a process has to create a channel. A channel is like a socket. When a client connects to a channel, it gives the the name of the group it would like to join. Thus, a channel is (in its connected state) always associated with a particular group. The protocol stack takes care that channels with the same group name find each other: whenever a client connects to a channel given group name G, then it tries to find existing channels with the same name, and joins them, resulting in a new view being installed (which contains the new member). If no members exist, a new group will be created.

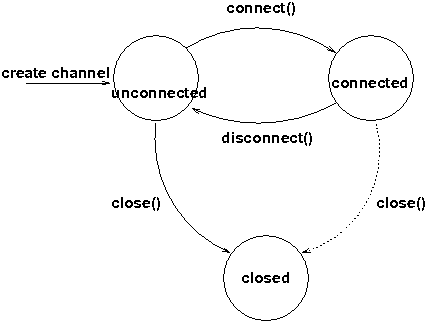

A state transition diagram for the major states a channel can assume are shown in [ChannelStatesFig].

When a channel is first created, it is in the unconnected state.

An attempt to perform certain operations which are only valid in the connected state (e.g. send/receive messages) will result in an exception.

After a successful connection by a client, it moves to the connected state. Now the channel will receive messages from other members and may send messages to other members or to the group, and it will get notified when new members join or leave. Getting the local address of a channel is guaranteed to be a valid operation in this state (see below).

When the channel is disconnected, it moves back to the unconnected state. Both a connected and unconnected channel may be closed, which makes the channel unusable for further operations. Any attempt to do so will result in an exception. When a channel is closed directly from a connected state, it will first be disconnected, and then closed.

The methods available for creating and manipulating channels are discussed now.

3.8.1. Creating a channel

A channel is created using one of its public constructors (e.g. new JChannel()).

The most frequently used constructor of JChannel looks as follows:

public JChannel(String props) throws Exception;The props argument points to an XML file containing the configuration of the protocol stack to be used. This can be a String, but there are also other constructors which take for example a DOM element or a URL (see the javadoc for details).

The code sample below shows how to create a channel based on an XML configuration file:

JChannel ch=new JChannel("/home/bela/udp.xml");If the props argument is null, the default properties will be used. An exception will be thrown if the channel cannot be created. Possible causes include protocols that were specified in the property argument, but were not found, or wrong parameters to protocols.

For example, the Draw demo can be launched as follows:

java org.javagroups.demos.Draw -props file:/home/bela/udp.xml

or

java org.javagroups.demos.Draw -props http://www.jgroups.org/udp.xml

In the latter case, an application downloads its protocol stack specification from a server, which allows for central administration of application properties.

A sample XML configuration looks like this (edited from udp.xml):

<config xmlns="urn:org:jgroups"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="urn:org:jgroups http://www.jgroups.org/schema/jgroups.xsd">

<UDP

mcast_port="${jgroups.udp.mcast_port:45588}"

ucast_recv_buf_size="20M"

ucast_send_buf_size="640K"

mcast_recv_buf_size="25M"

mcast_send_buf_size="640K"

loopback="true"

discard_incompatible_packets="true"

max_bundle_size="64K"

max_bundle_timeout="30"

ip_ttl="${jgroups.udp.ip_ttl:2}"

enable_diagnostics="true"

thread_pool.enabled="true"

thread_pool.min_threads="2"

thread_pool.max_threads="8"

thread_pool.keep_alive_time="5000"

thread_pool.queue_enabled="true"

thread_pool.queue_max_size="10000"

thread_pool.rejection_policy="discard"

oob_thread_pool.enabled="true"

oob_thread_pool.min_threads="1"

oob_thread_pool.max_threads="8"

oob_thread_pool.keep_alive_time="5000"

oob_thread_pool.queue_enabled="false"

oob_thread_pool.queue_max_size="100"

oob_thread_pool.rejection_policy="Run"/>

<PING timeout="2000"

num_initial_members="3"/>

<MERGE3 max_interval="30000"

min_interval="10000"/>

<FD_SOCK/>

<FD_ALL/>

<VERIFY_SUSPECT timeout="1500" />

<BARRIER />

<pbcast.NAKACK use_stats_for_retransmission="false"

exponential_backoff="0"

use_mcast_xmit="true"

retransmit_timeout="300,600,1200"

discard_delivered_msgs="true"/>

<UNICAST timeout="300,600,1200"/>

<pbcast.STABLE stability_delay="1000" desired_avg_gossip="50000"

max_bytes="4M"/>

<pbcast.GMS print_local_addr="true" join_timeout="3000"

view_bundling="true"/>

<UFC max_credits="2M"

min_threshold="0.4"/>

<MFC max_credits="2M"

min_threshold="0.4"/>

<FRAG2 frag_size="60K" />

<pbcast.STATE_TRANSFER />

</config>A stack is wrapped by <config> and </config> elements and lists all protocols from bottom

(UDP) to top (STATE_TRANSFER). Each element defines one protocol.

Each protocol is implemented as a Java class. When a protocol stack is created based on the above XML configuration, the first element ("UDP") becomes the bottom-most layer, the second one will be placed on the first, etc: the stack is created from the bottom to the top.

Each element has to be the name of a Java class that resides in the org.jgroups.protocols package.

Note that only the base name has to be given, not the fully specified class name

(UDP instead of org.jgroups.protocols.UDP).

If the protocol class is not found, JGroups assumes that the name given is a fully qualified classname

and will therefore try to instantiate that class. If this does not work an exception is thrown.

This allows for protocol classes to reside in different packages altogether, e.g. a valid protocol name

could be com.sun.eng.protocols.reliable.UCAST.

Each layer may have zero or more arguments, which are specified as a list of name/value pairs in

parentheses directly after the protocol name. In the example above, UDP is configured with some options,

one of them being the IP multicast port (mcast_port) which is set to 45588, or to the value of

the system property jgroups.udp.mcast_port, if set.

|

|

Note that all members in a group have to have the same protocol stack. |

Programmatic creation

Usually, channels are created by passing the name of an XML configuration file to the JChannel() constructor. On top of this declarative configuration, JGroups provides an API to create a channel programmatically.

The way to do this is to first create a JChannel, then an instance of

ProtocolStack, then add all desired protocols to the stack and finally calling init() on the stack

to set it up. The rest, e.g. calling JChannel.connect() is the same as with the declarative

creation.

An example of how to programmatically create a channel is shown below (copied from ProgrammaticChat):

public class ProgrammaticChat {

public static void main(String[] args) throws Exception {

JChannel ch=new JChannel(false); // (1)

ProtocolStack stack=new ProtocolStack(); // (2)

ch.setProtocolStack(stack);

stack.addProtocol(new UDP().setValue("bind_addr",

InetAddress.getByName("192.168.1.5")))

.addProtocol(new PING())

.addProtocol(new MERGE3())

.addProtocol(new FD_SOCK())

.addProtocol(new FD_ALL().setValue("timeout", 12000)

.setValue("interval", 3000))

.addProtocol(new VERIFY_SUSPECT())

.addProtocol(new BARRIER())

.addProtocol(new NAKACK())

.addProtocol(new UNICAST2())

.addProtocol(new STABLE())

.addProtocol(new GMS())

.addProtocol(new UFC())

.addProtocol(new MFC())

.addProtocol(new FRAG2()); // (3)

stack.init(); // (4)

ch.setReceiver(new ReceiverAdapter() {

public void viewAccepted(View new_view) {

System.out.println("view: " + new_view);

}

public void receive(Message msg) {

Address sender=msg.getSrc();

System.out.println(msg.getObject() + " [" + sender + "]");

}

});

ch.connect("ChatCluster");

for(;;) {

String line=Util.readStringFromStdin(": ");

ch.send(null, line);

}

}

}First a JChannel is created (1). The false argument tells the channel not to create a ProtocolStack.

This is needed because we will create one ourselves later and set it in the channel (2).

Next, all protocols are added to the stack (3). Note that the order is from bottom (transport protocol)

to top. So UDP as transport is added first, then PING and so on, until FRAG2, which is the top

protocol. Every protocol can be configured via setters, but there is also a generic

setValue(String attr_name, Object value), which can be used to configure protocols as well, as shown in the example.

Once the stack is configured, we call ProtocolStack.init() to link all protocols correctly and to

call init() in every protocol instance (4). After this, the channel is ready to be used and all

subsequent actions (e.g. connect()) can be executed. When the init() method returns, we have

essentially the equivalent of new JChannel(config_file).

3.8.2. Giving the channel a logical name

A channel can be given a logical name which is then used instead of the channel’s address in toString().

A logical name might show the function of a channel, e.g. "HostA-HTTP-Cluster", which is more legible

than a UUID 3c7e52ea-4087-1859-e0a9-77a0d2f69f29.

For example, when we have 3 channels, using logical names we might see a view {A,B,C}, which is nicer

than

{56f3f99e-2fc0-8282-9eb0-866f542ae437,ee0be4af-0b45-8ed6-3f6e-92548bfa5cde,

9241a071-10ce-a931-f675-ff2e3240e1ad}!

If no logical name is set, JGroups generates one, using the hostname and a random number, e.g.

linux-3442. If this is not desired and the UUIDs should be shown, use system property

-Djgroups.print_uuids=true.

The logical name can be set using:

public void setName(String logical_name);This must be done before connecting a channel. Note that the logical name stays with a channel until the channel is destroyed, whereas a UUID is created on each connection.

When JGroups starts, it prints the logical name and the associated physical address(es):

------------------------------------------------------------------- GMS: address=mac-53465, cluster=DrawGroupDemo, physical address=192.168.1.3:49932 -------------------------------------------------------------------

The logical name is mac-53465 and the physical address is 192.168.1.3:49932. The UUID is not shown here.

3.8.3. Generating custom addresses

Since 2.12 address generation is pluggable. This means that an application can determine what kind of

addresses it uses. The default address type is UUID, and since some protocols use UUID, it is

recommended to provide custom classes as subclasses of UUID.

This can be used to for example pass additional data around with an address, for example information about the location of the node to which the address is assigned. Note that methods equals(), hashCode() and compare() of the UUID super class should not be changed.

To use custom addresses, an implementation of org.jgroups.stack.AddressGenerator

has to be written.

For any class CustomAddress, it will need to get registered with the ClassConfigurator in order to marshal it correctly:

class CustomAddress extends UUID {

static {

ClassConfigurator.add((short)8900, CustomAddress.class);

}

}|

|

Note that the ID should be chosen such that it doesn’t collide with any IDs defined in

jg-magic-map.xml.

|

Set the address generator in JChannel: setAddressGenerator(AddressGenerator). This has to

be done before the channel is connected.

An example of a subclass is org.jgroups.util.PayloadUUID, and there are two more shipped with JGroups.

3.8.4. Joining a cluster

When a client wants to join a cluster, it connects to a channel giving the name of the cluster to be joined:

public void connect(String cluster) throws Exception;The cluster name is the name of the cluster to be joined. All channels that call connect() with

the same name form a cluster. Messages sent on any channel in the cluster will be received by all

members (including the one who sent it.

|

|

Local delivery can be turned off using setDiscardOwnMessages(true).

|

The connect() method returns as soon as the cluster has been joined successfully. If the channel is in

the closed state (see channel states), an exception will be thrown. If there are

no other members, i.e. no other member has connected to a cluster with this name, then a new cluster is

created and the member joins it as first member. The first member of a cluster becomes its coordinator.

A coordinator is in charge of installing new views whenever the membership changes

3.8.5. Joining a cluster and getting the state in one operation

Clients can also join a cluster and fetch cluster state in one operation.

The best way to conceptualize the connect and fetch state connect method is to think of it as an

invocation of the regular connect() and getState() methods executed in succession. However, there are

several advantages of using the connect and fetch state connect method over the regular connect. First

of all, the underlying message exchange is heavily optimized, especially if the flush protocol is used.

But more importantly, from a client’s perspective, the connect() and fetch state operations become

one atomic operation.

public void connect(String cluster, Address target, long timeout) throws Exception;Just as in a regular connect(), the cluster name represents a cluster to be joined. The target parameter indicates a cluster member to fetch the state from. A null target indicates that the state should be fetched from the cluster coordinator. If the state should be fetched from a particular member other than the coordinator, clients can simply provide the address of that member. The timeout paremeter bounds the entire join and fetch operation. An exception will be thrown if the timeout is exceeded.

3.8.6. Getting the local address and the cluster name

Method getAddress() returns the address of the channel. The address may or may

not be available when a channel is in the unconnected state.

public Address getAddress();Method getClusterName() returns the name of the cluster which the member joined.

public String getClusterName();Again, the result is undefined if the channel is in the disconnected or closed state.

3.8.7. Getting the current view

The following method can be used to get the current view of a channel:

public View getView();This method returns the current view of the channel. It is updated every time a new view is

installed (viewAccepted() callback).

Calling this method on an unconnected or closed channel is implementation defined. A channel may return null, or it may return the last view it knew of.

3.8.8. Sending messages

Once the channel is connected, messages can be sent using one of the send() methods:

public void send(Message msg) throws Exception;

public void send(Address dst, Serializable obj) throws Exception;

public void send(Address dst, byte[] buf) throws Exception;

public void send(Address dst, byte[] buf, int off, int len) throws Exception;The first send() method has only one argument, which is the message to be sent.

The message’s destination should either be the address of the receiver (unicast) or null (multicast).

When the destination is null, the message will be sent to all members of the cluster (including itself).

The remainaing send() methods are helper methods; they take either a byte[]

buffer or a serializable, create a Message and call send(Message).

If the channel is not connected, or was closed, an exception will be thrown upon attempting to send a message.

Here’s an example of sending a message to all members of a cluster:

Map data; // any serializable data

channel.send(null, data);The null value as destination address means that the message will be sent to all members in the cluster.

The payload is a hashmap, which will be serialized into the message’s buffer and unserialized at the

receiver. Alternatively, any other means of generating a byte buffer and setting the message’s buffer

to it (e.g. using Message.setBuffer()) also works.

Here’s an example of sending a unicast message to the first member (coordinator) of a group:

Map data;

Address receiver=channel.getView().getMembers().get(0);

channel.send(receiver, "hello world");The sample code determines the coordinator (first member of the view) and sends it a "hello world" message.

Discarding one’s own messages

Sometimes, it is desirable not to have to deal with one’s own messages, ie. messages sent by oneself.

To do this, JChannel.setDiscardOwnMessages(boolean flag) can be set to

true (false by default). This means that every cluster node will receive a message sent

by P, but P itself won’t.

Note that this method replaces the old JChannel.setOpt(LOCAL, false) method, which was removed in 3.0.

Synchronous messages

While JGroups guarantees that a message will eventually be delivered at all non-faulty members, sometimes this might take a while. For example, if we have a retransmission protocol based on negative acknowledgments, and the last message sent is lost, then the receiver(s) will have to wait until the stability protocol notices that the message has been lost, before it can be retransmitted.

This can be changed by setting the Message.RSVP flag in a message: when this flag is encountered,

the message send blocks until all members have acknowledged reception of the message (of course

excluding members which crashed or left meanwhile).

This also serves as another purpose: if we send an RSVP-tagged message, then - when the send() returns - we’re guaranteed that all messages sent before will have been delivered at all members as well. So, for example, if P sends message 1-10, and marks 10 as RSVP, then, upon JChannel.send() returning, P will know that all members received messages 1-10 from P.

Note that since RSVP’ing a message is costly, and might block the sender for a while, it should be used sparingly. For example, when completing a unit of work (ie. member P sending N messages), and P needs to know that all messages were received by everyone, then RSVP could be used.

To use RSVP, 2 things have to be done:

First, the RSVP protocol has to be in the config, somewhere above the reliable transmission

protocols such as NAKACK or UNICAST(2), e.g.:

<config>

<UDP/>

<PING />

<FD_ALL/>

<pbcast.NAKACK use_mcast_xmit="true"

discard_delivered_msgs="true"/>

<UNICAST timeout="300,600,1200"/>

<RSVP />

<pbcast.STABLE stability_delay="1000" desired_avg_gossip="50000"

max_bytes="4M"/>

<pbcast.GMS print_local_addr="true" join_timeout="3000"

view_bundling="true"/>

...

</config>Secondly, the message we want to get ack’ed must be marked as RSVP:

Message msg=new Message(null, null, "hello world");

msg.setFlag(Message.RSVP);

ch.send(msg);Here, we send a message to all cluster members (dest == null). (Note that RSVP also works for sending

a message to a unicast destination). Method send() will return as soon as it has received acks from

all current members. If there are 4 members A, B, C and D, and A has received acks from itself, B

and C, but D’s ack is missing and D crashes before the timeout kicks in, then this will

nevertheless make send() return, as if D had actually sent an ack.

If the timeout property is greater than 0, and we don’t receive all acks within

timeout milliseconds, a TimeoutException will be thrown (if RSVP.throw_exception_on_timeout is true).

The application can choose to catch this (runtime) exception and do something with it, e.g. retry.

The configuration of RSVP is described here: RSVP.

|

|

RSVP was added in version 3.1. |

Non blocking RSVP

Sometimes a sender wants a given message to be resent until it has been received, or a timeout occurs, but doesn’t want

to block. As an example, RpcDispatcher.callRemoteMethodsWithFuture() needs to return immediately, even if the results

aren’t available yet. If the call options contain flag RSVP, then the future would only be returned once all

responses have been received. This is clearly undesirable behavior.

To solve this, flag RSVP_NB (non-blocking) can be used. This has the same behavior as RSVP, but the caller is not

blocked by the RSVP protocol. When a timeout occurs, a warning message will be logged, but since the caller doesn’t

block, the call won’t throw an exception.

3.8.9. Receiving messages

Method receive() in ReceiverAdapter (or Receiver) can be overridden to

receive messages, views, and state transfer callbacks.

public void receive(Message msg);A Receiver can be registered with a channel using JChannel.setReceiver(). All received messages, view

changes and state transfer requests will invoke callbacks on the registered Receiver:

JChannel ch=new JChannel();

ch.setReceiver(new ReceiverAdapter() {

public void receive(Message msg) {

System.out.println("received message " + msg);

}

public void viewAccepted(View view) {

System.out.println("received view " + new_view);

}

});

ch.connect("MyCluster");|

|

The semantics of receive(Message msg) will change slightly in 4.0: as the buffer of msg might get reused by

the transport (to reduce the memory allocation rate), the receive() method must consume the buffer

(e.g. de-serialize it into an application object), or make a copy.

As soon as receive() returns, the message’s buffer might get overwritten with new data.

|

3.8.10. Receiving view changes

As shown above, the viewAccepted() callback of ReceiverAdapter can be used

to get callbacks whenever a cluster membership change occurs. The receiver needs to be set via

JChannel.setReceiver(Receiver).

As discussed in ReceiverAdapter, code in callbacks must avoid anything that takes a lot of time, or blocks; JGroups invokes this callback as part of the view installation, and if this user code blocks, the view installation would block, too.

3.8.11. Getting the group’s state

A newly joined member may want to retrieve the state of the cluster before starting work. This is done

with getState():

public void getState(Address target, long timeout) throws Exception;This method returns the state of one member (usually of the oldest member, the coordinator). The target parameter can usually be null, to ask the current coordinator for the state. If a timeout (ms) elapses before the state is fetched, an exception will be thrown. A timeout of 0 waits until the entire state has been transferred.

|

|

The reason for not directly returning the state as a result of getState() is that the state has to be returned in the correct position relative to other messages. Returning it directly would violate the FIFO properties of a channel, and state transfer would not be correct! |

To participate in state transfer, both state provider and state requester have to implement the following callbacks from ReceiverAdapter (Receiver):

public void getState(OutputStream output) throws Exception;

public void setState(InputStream input) throws Exception;Method getState() is invoked on the state provider (usually the coordinator). It

needs to write its state to the output stream given. Note that output doesn’t need to be closed when

done (or when an exception is thrown); this is done by JGroups.

The setState() method is invoked on the state requester; this is the member

which called JChannel.getState(). It needs to read its state from the input stream and set its

internal state to it. Note that input doesn’t need to be closed when

done (or when an exception is thrown); this is done by JGroups.

In a cluster consisting of A, B and C, with D joining the cluster and calling Channel.getState(), the

following sequence of callbacks happens:

-

D calls

JChannel.getState(). The state will be retrieved from the oldest member, A -

A’s

getState()callback is called. A writes its state to the output stream passed as a parameter togetState(). -

D’s

setState()callback is called with an input stream as argument. D reads the state from the input stream and sets its internal state to it, overriding any previous data. -

D:

JChannel.getState()returns. Note that this will only happen after the state has been transferred successfully, or a timeout elapsed, or either the state provider or requester throws an exception. Such an exception will be re-thrown bygetState(). This could happen for instance if the state provider’sgetState()callback tries to stream a non-serializable class to the output stream.

The following code fragment shows how a group member participates in state transfers:

public void getState(OutputStream output) throws Exception {

synchronized(state) {

Util.objectToStream(state, new DataOutputStream(output));

}

}

public void setState(InputStream input) throws Exception {

List<String> list;

list=(List<String>)Util.objectFromStream(new DataInputStream(input));

synchronized(state) {

state.clear();

state.addAll(list);

}

System.out.println(list.size() + " messages in chat history):");

for(String str: list)

System.out.println(str);

}This code is the Chat example from the JGroups tutorial and the state here is a list of strings.

The getState() implementation synchronized on the state (so no incoming messages can modify it during

the state transfer), and uses the JGroups utility method objectToStream().

The setState() implementation also uses the Util.objectFromStream() utility method to read the state from

the input stream and assign it to its internal list.

State transfer protocols

In order to use state transfer, a state transfer protocol has to be included in the configuration.

This can either be STATE_TRANSFER, STATE, or STATE_SOCK. More details on the protocols can

be found in the protocols list section.

This is the original state transfer protocol, which used to transfer byte[] buffers. It still does

that, but is internally converted to call the getState() and setState() callbacks which use

input and output streams.

Note that, because byte[] buffers are converted into input and output streams, this protocol

should not be used for transfer of large states.

For details see pbcast.STATE_TRANSFER.

This is the STREAMING_STATE_TRANSFER protocol, renamed in 3.0. It sends the entire state

across from the provider to the requester in (configurable) chunks, so that memory consumption

is minimal.

For details see pbcast.STATE.

Same as STREAMING_STATE_TRANSFER, but a TCP connection between provider and requester is

used to transfer the state.

For details see STATE_SOCK.

3.8.12. Disconnecting from a channel

Disconnecting from a channel is done using the following method:

public void disconnect();It will have no effect if the channel is already in the disconnected or closed state. If connected, it will leave the cluster. This is done (transparently for a channel user) by sending a leave request to the current coordinator. The latter will subsequently remove the leaving node from the view and install a new view in all remaining members.

After a successful disconnect, the channel will be in the unconnected state, and may subsequently be reconnected.

3.8.13. Closing a channel

To destroy a channel instance (destroy the associated protocol stack, and release all resources),

method close() is used:

public void close();Closing a connected channel disconnects the channel first.

The close() method moves the channel to the closed state, in which no further operations are allowed (most throw an exception when invoked on a closed channel). In this state, a channel instance is not considered used any longer by an application and — when the reference to the instance is reset — the channel essentially only lingers around until it is garbage collected by the Java runtime system.

4. Building Blocks

Building blocks are layered on top of channels, and can be used instead of channels whenever a higher-level interface is required.

Whereas channels are simple socket-like constructs, building blocks may offer a far more sophisticated

interface. In some cases, building blocks offer access to the underlying channel, so that — if the building

block at hand does not offer a certain functionality — the channel can be accessed directly. Building blocks

are located in the org.jgroups.blocks package.

4.1. MessageDispatcher

Channels are simple patterns to asynchronously send and receive messages. However, a significant number of communication patterns in group communication require synchronous communication. For example, a sender would like to send a message to the group and wait for all responses. Or another application would like to send a message to the group and wait only until the majority of the receivers have sent a response, or until a timeout occurred.

MessageDispatcher provides blocking (and non-blocking) request sending and response correlation. It offers synchronous (as well as asynchronous) message sending with request-response correlation, e.g. matching one or multiple responses with the original request.

An example of using this class would be to send a request message to all cluster members, and block until all responses have been received, or until a timeout has elapsed.

Contrary to RpcDispatcher, MessageDispatcher deals with sending message requests and correlating message responses, while RpcDispatcher deals with invoking method calls and correlating responses. RpcDispatcher extends MessageDispatcher, and offers an even higher level of abstraction over MessageDispatcher.

RpcDispatcher is essentially a way to invoke remote procedure calls (RCs) across a cluster.

Both MessageDispatcher and RpcDispatcher sit on top of a channel; therefore an instance of

MessageDispatcher is created with a channel as argument. It can now be

used in both client and server role: a client sends requests and receives responses and

a server receives requests and sends responses. MessageDispatcher allows for an

application to be both at the same time. To be able to serve requests in the server role, the

RequestHandler.handle() method has to be implemented:

Object handle(Message msg) throws Exception;The handle() method is called whenever a request is received. It must return a value

(must be serializable, but can be null) or throw an exception. The returned value will be sent to the sender,

and exceptions are also propagated to the sender.

Before looking at the methods of MessageDispatcher, let’s take a look at RequestOptions first.

4.1.1. RequestOptions

Every message sending in MessageDispatcher or request invocation in RpcDispatcher is governed by an instance of RequestOptions. This is a class which can be passed to a call to define the various options related to the call, e.g. a timeout, whether the call should block or not, the flags (see Tagging messages with flags) etc.

The various options are:

-

Response mode: this determines whether the call is blocking and - if yes - how long it should block. The modes are:

GET_ALL-

Block until responses from all members (minus the suspected ones) have been received.

GET_NONE-

Wait for none. This makes the call non-blocking

GET_FIRST-

Block until the first response (from anyone) has been received

GET_MAJORITY-

Block until a majority of members have responded

-

Timeout: number of milliseconds we’re willing to block. If the call hasn’t terminated after the timeout elapsed, a TimeoutException will be thrown. A timeout of 0 means to wait forever. The timeout is ignored if the call is non-blocking (mode=

GET_NONE) -

Anycasting: if set to true, this means we’ll use unicasts to individual members rather than sending multicasts. For example, if we have have TCP as transport, and the cluster is {A,B,C,D,E}, and we send a message through MessageDispatcher where dests={C,D}, and we do not want to send the request to everyone, then we’d set anycasting=true. This will send the request to C and D only, as unicasts, which is better if we use a transport such as TCP which cannot use IP multicasting (sending 1 packet to reach all members).

-

Response filter: A RspFilter allows for filtering of responses and user-defined termination of a call. For example, if we expect responses from 10 members, but can return after having received 3 non-null responses, a RspFilter could be used. See Response filters for a discussion on response filters.

-

Scope: a short, defining a scope. This allows for concurrent delivery of messages from the same sender. See Scopes: concurrent message delivery for messages from the same sender for a discussion on scopes.

-

Flags: the various flags to be passed to the message, see the section on message flags for details.

-

Exclusion list: here we can pass a list of members (addresses) that should be excluded. For example, if the view is A,B,C,D,E, and we set the exclusion list to A,C then the caller will wait for responses from everyone except A and C. Also, every recipient that’s in the exclusion list will discard the message.

An example of how to use RequestOptions is:

RpcDispatcher disp;

RequestOptions opts=new RequestOptions(Request.GET_ALL)

.setFlags(Message.NO_FC, Message.DONT_BUNDLE);

Object val=disp.callRemoteMethod(target, method_call, opts);The methods to send requests are:

public <T> RspList<T>

castMessage(final Collection<Address> dests, Message msg,

RequestOptions options) throws Exception;

public <T> NotifyingFuture<RspList<T>>

castMessageWithFuture(final Collection<Address> dests, Message msg,

RequestOptions options) throws Exception;

public <T> T sendMessage(Message msg, RequestOptions opts) throws Exception;

public <T> NotifyingFuture<T>

sendMessageWithFuture(Message msg, RequestOptions options) throws Exception;castMessage() sends a message to all members defined in

dests. If dests is null, the message will be sent to all

members of the current cluster. Note that a possible destination set in the message will be overridden.

If a message is sent synchronously (defined by options.mode) then options.timeout

defines the maximum amount of time (in milliseconds) to wait for the responses.

castMessage() returns a RspList, which contains a map of addresses and Rsps;

there’s one Rsp per member listed in dests.

A Rsp instance contains the response value (or null), an exception if the target handle() method threw

an exception, whether the target member was suspected, or not, and so on. See the example below for

more details.

castMessageWithFuture() returns immediately, with a future. The future

can be used to fetch the response list (now or later), and it also allows for installation of a callback

which will be invoked whenever the future is done.

See Asynchronous calls with futures for details on how to use NotifyingFutures.

sendMessage() allows an application programmer to send a unicast message to a

single cluster member and receive the response. The destination of the message has to be non-null (valid

address of a member). The mode argument is ignored (it is by default set to

ResponseMode.GET_FIRST) unless it is set to GET_NONE in which case

the request becomes asynchronous, ie. we will not wait for the response.

sendMessageWithFuture() returns immediately with a future, which can be used to

fetch the result.

One advantage of using this building block is that failed members are removed from the set of expected responses. For example, when sending a message to 10 members and waiting for all responses, and 2 members crash before being able to send a response, the call will return with 8 valid responses and 2 marked as failed. The return value of castMessage() is a RspList which contains all responses (not all methods shown):

public class RspList<T> implements Map<Address,Rsp> {

public boolean isReceived(Address sender);

public int numSuspectedMembers();

public List<T> getResults();

public List<Address> getSuspectedMembers();

public boolean isSuspected(Address sender);

public Object get(Address sender);

public int size();

}isReceived() checks whether a response from sender

has already been received. Note that this is only true as long as no response has yet been received, and the

member has not been marked as failed. numSuspectedMembers() returns the number of

members that failed (e.g. crashed) during the wait for responses. getResults()

returns a list of return values. get() returns the return value for a specific member.

4.1.2. Requests and target destinations

When a non-null list of addresses is passed (as the destination list) to MessageDispatcher.castMessage() or

RpcDispatcher.callRemoteMethods(), then this does not mean that only the members

included in the list will receive the message, but rather it means that we’ll only wait for responses from

those members, if the call is blocking.

If we want to restrict the reception of a message to the destination members, there are a few ways to do this:

-

If we only have a few destinations to send the message to, use several unicasts.

-

Use anycasting. E.g. if we have a membership of

{A,B,C,D,E,F}, but only want A and C to receive the message, then set the destination list to A and C and enable anycasting in the RequestOptions passed to the call (see above). This means that the transport will send 2 unicasts. -

Use exclusion lists. If we have a membership of

{A,B,C,D,E,F}, and want to send a message to almost all members, but exclude D and E, then we can define an exclusion list: this is done by settting the destination list tonull(= send to all members), or to{A,B,C,D,E,F}and set the exclusion list in the RequestOptions passed to the call to D and E.

4.1.3. Example

This section shows an example of how to use a MessageDispatcher.

public class MessageDispatcherTest implements RequestHandler {

Channel channel;

MessageDispatcher disp;

RspList rsp_list;

String props; // to be set by application programmer

public void start() throws Exception {

channel=new JChannel(props);

disp=new MessageDispatcher(channel, null, null, this);

channel.connect("MessageDispatcherTestGroup");

for(int i=0; i < 10; i++) {

Util.sleep(100);

System.out.println("Casting message #" + i);

rsp_list=disp.castMessage(null,

new Message(null, null, new String("Number #" + i)),

ResponseMode.GET_ALL, 0);

System.out.println("Responses:\n" +rsp_list);

}

channel.close();

disp.stop();

}

public Object handle(Message msg) throws Exception {

System.out.println("handle(): " + msg);

return "Success!";

}

public static void main(String[] args) {

try {

new MessageDispatcherTest().start();

}

catch(Exception e) {

System.err.println(e);

}

}

}The example starts with the creation of a channel. Next, an instance of MessageDispatcher is created on top of the channel. Then the channel is connected. The MessageDispatcher will from now on send requests, receive matching responses (client role) and receive requests and send responses (server role).

We then send 10 messages to the group and wait for all responses. The timeout argument is 0, which causes the call to block until all responses have been received.

The handle() method simply prints out a message and returns a string. This will

be sent back to the caller as a response value (in Rsp.value). Has the call thrown an exception,

Rsp.exception would be set instead.

Finally both the MessageDispatcher and channel are closed.

4.2. RpcDispatcher

RpcDispatcher is derived from MessageDispatcher. It allows a

programmer to invoke remote methods in all (or single) cluster members and optionally wait for the return